What is overlooked by AI

AI sorts by probability and ignores deviations. This is precisely where research-based artist Jooyoung Oh comes in: she tracks overlooked data sets and exposes the blind spots of machine learning – with surprising insights.

Artificial intelligence often seems like a creature that learns from past data and uses it to create something new, much as humans do. Actually, though, AI is a technical system that recognizes recurring patterns in available data and, based on those patterns, calculates the most probable results, which correspond to a representative average of the data set it’s trained on. Deviations from these patterns tend to be overlooked. Artist Jooyoung Oh focuses on these deviations, in other words on the data that AI patterns disregard.

Overlooking deviations

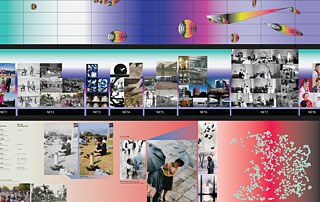

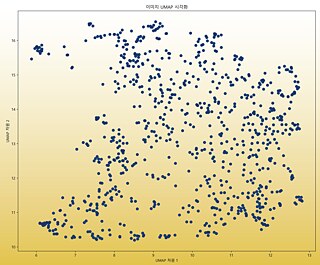

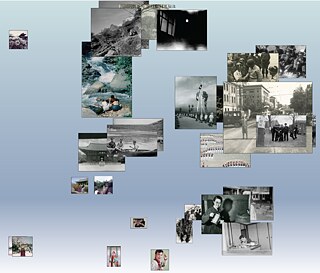

At the “Shaping AI: Ethics, Power, Responsibility” workshop held on November 28–29, 2025 at the Goethe-Institut Korea, artist Jooyoung Oh asked what happens when something doesn’t match the pattern. AI looks for patterns, she explained, and tends to marginalize whatever doesn’t fit in. Hence the key question: “What gets to stay in and what gets thrown out?” In her opinion, any debate about AI ethics should start with decisions as to which data and archival materials to take into account.

At the workshop, Oh also presented her collaboration with Photo SeMA (Photography Seoul Museum of Art), for which she’d created an “image similarity network” to analyze old Korean photographs from the 1920s to the 1980s. The network puts similar images close together, and outliers far apart from the rest. The photographic works of artist Koo Bohnchang, for instance, with their signature “red dots”, were placed on the margins of the AI-recognized patterns. This is how such “unusual data” gets excluded from the “mainstream” pattern and, as a result, receives less attention in the overall structure.
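How a similarity network pushes unusual images to the margins can be illustrated with a minimal sketch. It is not the system Oh built for Photo SeMA: the feature vectors, the photo names and the choice of cosine similarity are all assumptions made purely for illustration. Each image is reduced to a short list of numbers, and the image that is least similar to all the others on average ends up as the outlier.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def mean_similarity(features):
    """Map each image name to its average similarity to all other images."""
    scores = {}
    for name, vec in features.items():
        others = [cosine(vec, v) for n, v in features.items() if n != name]
        scores[name] = sum(others) / len(others)
    return scores

# Hypothetical toy feature vectors; a real system would derive them
# from the photographs with an image model.
features = {
    "photo_a": [0.90, 0.10, 0.00],
    "photo_b": [0.80, 0.20, 0.00],
    "photo_c": [0.85, 0.15, 0.05],
    "photo_d": [0.10, 0.20, 0.95],  # deliberately unlike the others
}

scores = mean_similarity(features)
# The image with the lowest average similarity sits on the margins.
outlier = min(scores, key=scores.get)
print(outlier)
```

In this toy set, `photo_d` has little in common with the three near-identical vectors, so any layout based on these scores would place it far from the cluster.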

Uncritical acceptance

Since AI answers usually reflect the plausible average of many possible responses, works previously presented by Koo Bohnchang were likely to “fall off the grid”. The point here is that although AI results clearly need to be challenged, they’re often unthinkingly accepted in everyday practice – a widespread habit that Jooyoung Oh seeks to call into question.

Her 2025 installation Machine Appreciation System is a participatory work: viewers select and enter an image, which the AI interprets in the form of a text that reads like a review. On the face of it, it seems as though humans were giving the AI training signals. As it turns out, however, the system is based on the fact that viewers end up agreeing with the AI’s plausible critiques. Jooyoung Oh warns that experiences of this kind, especially if repeated over and over again, can undermine our ability to make judgments for ourselves and can give rise to “quasi-delusory perceptions” instead.

In search of long-lost voices

The selection of data sets may vary according to gender and social background. Jooyoung Oh’s Literary Girl 1974 Bot project ties the question of how female literature is preserved – or not – into the problem of creating data sets. Back in the days when “literate women” were considered dangerous in Korea, some girls did indeed write – but hardly any of their literary works have been preserved. Given the dearth of digital data to work with, Oh ended up digitizing their texts herself by scanning or typing out each page.

She developed the chatbot for this project not to rework or otherwise change the original texts, but to put them back into their original form. The chatbot was programmed to respond only to questions directly related to the existing data – instead of providing a friendly answer to every question posed by the user. Oh seeks to show that technology doesn’t have to reinvent missing narratives: it can also be used to bring back long-lost voices and make them visible again without adulterating them.
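The idea of a bot that answers only from what the archive actually contains can be sketched in a few lines. This is not the Literary Girl 1974 Bot itself: the placeholder passages, the word-overlap rule, the stopword list and the `min_overlap` threshold are all assumptions chosen for illustration. The sketch returns an archived passage unchanged when a question shares enough words with it, and otherwise declines to answer.

```python
import re

# Hypothetical stopword list; common words should not count as a match.
STOPWORDS = {"the", "a", "an", "to", "on", "of", "about", "what",
             "did", "is", "in", "and", "she"}

def tokens(text):
    """Lowercase word set of a text, without stopwords."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS

def answer(question, archive, min_overlap=2):
    """Return the best-matching archived passage verbatim, or None."""
    if not archive:
        return None
    q = tokens(question)
    best = max(archive, key=lambda passage: len(q & tokens(passage)))
    if len(q & tokens(best)) < min_overlap:
        return None  # decline rather than invent an answer
    return best

# Placeholder passages standing in for digitized texts (not real quotations).
archive = [
    "placeholder passage about rain falling on the school yard",
    "placeholder passage about a letter to a distant friend",
]

print(answer("What did she write about the rain on the school yard?", archive))
print(answer("What is the weather in Berlin today?", archive))
```

Returning `None` instead of a generated reply is the design choice that matters here: the original wording is never paraphrased, and questions outside the archive simply go unanswered.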

Illustrations of consumer ideals

Jooyoung Oh also drew attention to the problem of image bias. She showed that AI trained on the cover photos used for girls’ magazines back in the 1970s will consistently generate images of young women that correspond to the prevailing consumer ideals of that era. But when current-day female students took pictures of themselves and submitted them for a photo contest, the subjects looked very different: they displayed a much wider variety of facial expressions and gestures and came across as far more lively and dynamic, a stark contrast to the AI-generated pictures of women in passive poses. This goes to show how much any image of women produced by an AI will be biased by the data set it was trained on. Which is why Oh insists on the need for concerted cultural and political efforts to include a wide range of perspectives – by diversifying the training data to begin with.

Our responsibility

Jooyoung Oh’s presentation returns to this key question in Korea’s AI debate today: “What does AI pick – and what does it leave out?” AI learns and repeats patterns. Again, anything that doesn’t conform to these patterns tends to get sidelined. And yet, more often than not, we willingly accept the results offered up by AI technology because they seem “plausible”. In so doing, however, we risk losing our ability to use our own judgment and to take responsibility for our choices.

So we need to rethink data sets in order to rediscover missing voices and incorporate divergent points of view. This is a precondition for an ethical and inclusive use of AI, and it will enable cultural resources created for the public to begin critically questioning the selection process for AI training data.

Author: Soyoung Choi

Korean Proofreading: Young-Rong Choo

Edit & Concept: Leslie Klatte

English Translation: Eric Rosencrantz

German Translation: Star Korea AG
