The AI Decision-making Dilemma

Who’s responsible when AI makes mistakes? So far, humans have generally been to blame: somebody made a wrong decision, somebody approved it, somebody implemented it. Whenever a problem arose, it could be traced back to its source by following that chain of responsibility.

But the more AI gets a say in the decision-making process, the more opaque that process will become. In future, it’s going to be increasingly difficult to figure out which data or criteria led to a given conclusion or at what point in the process human input was involved. And it’s going to become increasingly easy to pass the buck and blame the technology: “The AI said so.”

At the workshop on “Shaping AI: Ethics, Power, Responsibility”, Professor Hyundeuk Cheon of Seoul National University addressed these aspects of AI ethics, how to regulate responsibility and why the lack of transparency is a problem.

Transparency: The key to AI ethics

Transparency is a key principle of ethical AI guidelines. Whether it’s referred to as “explainability”, “traceability” or “comprehensibility”, Cheon said, the underlying demand is the same: why a system has decided one way or another must be made clear.

It is often claimed that humans may be biased, whereas technology can be more objective. Cheon cited the example of an “AI judge”. Replacing human judges with AI judges may seem fair on the face of it, but first we have to define “fairness”. Humans must decide which goals to prioritize, which errors are potentially dangerous, which data to include and which to leave out. The AI, in turn, will reflect these social value judgments.

The assumption that technology is automatically more objective is misleading and dangerous. The more a society talks about fairness, the more it will base decisions on transparent processes and metrics – though these metrics themselves are based on prior value judgments.

The responsibility vacuum of autonomous technologies

As AI becomes more autonomous, we give it greater scope to make judgments and take action itself. Examples include self-driving cars, autonomous weapons systems and agent-based AI, whose use is justified by persuasive arguments: Autonomous vehicles reduce the incidence of accidents caused by human error and improve mobility for vulnerable groups in society. Autonomous weapons systems reduce the number of casualties and the psychological stress on soldiers. Agent-based AI performs tasks faster and more efficiently.

“But that’s not the whole truth,” said Professor Cheon. Greater efficiency can undermine accountability. Human beings are autonomous agents, responsible for their actions – but what happens when decisions and actions are delegated to machines? Machines have no free will, no intention and no consciousness of consequences.

So who’s to blame? Take war, for instance: who’s responsible when a drone kills civilians – the developer, the commanding officer or the drone itself? Developers and commanders might say, “We didn’t order that strike.” The drone itself can’t be held accountable. The result is a so-called “responsibility gap”.

Cheon made the case for an expanded conception of responsibility that considers criteria such as the chain of command as well as the preconditions for and benefits of using the system or technology in question. If we stick to a mindset of “I did it myself, so I’m responsible”, then this accountability vacuum will only grow larger because we hardly do anything all by ourselves anymore.

When black boxes invade the public sphere

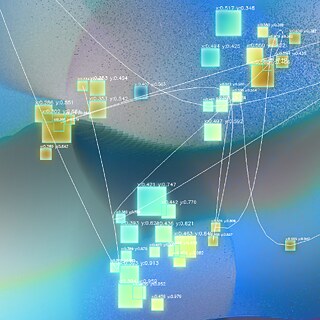

Cheon put the problem of the AI black box in a nutshell: “For the responsible use of AI, the system needs to be explainable, and this explanation must be made accessible to users.” If only the results are presented without revealing the reasoning behind them, there’s no basis for people to accept or reject those results.

He also observed that “opacity” is a multifaceted problem. It includes the deliberate use of opacity by companies seeking to keep trade secrets under wraps for competitive reasons. Even after disclosure, the opacity of the decision-making processes and technical systems involved makes it difficult for laypeople to understand what’s actually going on behind the scenes. But the biggest challenge of all is that opacity makes it difficult even for developers and experts to accurately predict the outcome.

Cheon compared this situation to Kafka’s novel The Trial, in which Josef K. is arrested – and summarily executed – without being told why. This final scene shows “how easily an unexplained verdict can render people powerless”, said Cheon. When public policy decisions go unexplained, the people concerned are excluded from the decision-making process.

Another case in point concerned the dismissal of a teacher. Sarah Wysocki, a teacher at a public elementary school in Washington, DC, was sacked after receiving a low score from a teacher evaluation system – despite her recognized excellence as a schoolteacher. All the school board could say by way of explanation was: “It’s the algorithm that computed her score.” Public policy decisions reached in this manner are then simply handed down without any justification.

Cheon added that transparency is not a matter of “courtesy”. Public policy decisions must be justified by giving reasons that are acceptable to the public. Transparency is a necessary precondition for public trust.

Transparency as a civil right

So what should we as a society demand? In Cheon’s opinion, AI used in the public sphere should be designed in such a way as to enable the public to understand how decisions are made and why. Only then can we agree, disagree, or call for a review of the proposed measures. Transparency is, again, not a matter of courtesy, but a minimum requirement for public decision-making processes.

Cheon warned against leaving visions of the future to multinational technology corporations. Technology is not a natural phenomenon that overwhelms us: it is the outcome of goals that are set, concepts that are developed and implemented. The development of civil society depends on public involvement, on people speaking up and speaking out, listening to one another, and discussing problems that arise. Public policy decisions must be publicly explained, and civil society itself must assert its right to demand such explanations.

Author: Soyoung Choi

Korean Proofreading: Young-Rong Choo

Edit & Concept: Leslie Klatte

English Translation: Eric Rosencrantz

German Translation: Star Korea AG