The Danger of AI Excess

AI increasingly controls our behavior and binds us to digital services—often without us realizing the consequences. Youjin Jeon sheds light on what this development means for our autonomy and what hidden mechanisms shape modern AI systems.

AI tends to be regarded nowadays as a service and a product more than as pure technology. AI services are specially designed for long-term use, and the more regularly people use them, the more valuable they become to AI companies. AI isn’t just a neutral tool: it’s a consumer product that actually promotes overuse – and even misuse and dependence. The problem is we’ve already worked AI into the very fabric of our everyday lives without being fully aware of the potential consequences.

Misleading terminology and overblown expectations

At the “Shaping AI: Ethics, Power, Responsibility” workshop, Youjin Jeon of the Woman Open Tech Lab in Korea challenged some basic assumptions about AI: namely, the widespread belief in the neutrality of technology, the connotation of authority in the very term “artificial intelligence” and the overblown expectations aroused by the word “intelligence”. AI learns from the data it’s trained on, and if that data is biased, so are the results. Nevertheless, we often trust technology to be an objective judge. This, Jeon pointed out, is where the problem starts.

“Why do we call it artificial ‘intelligence’?” Jeon kept asking. Terms like “intelligence”, “thinking”, and “understanding” may seem appropriate, but they mislead us into anthropomorphizing technology: i.e., thinking it’s somehow similar to human beings. Even the word “general” in “artificial general intelligence” (AGI) can serve as a norm to distinguish between “normal” and “abnormal”. This language gives rise to inflated expectations and fears – regardless of what the technology actually does.

Why bias is not a mistake, but a mirror

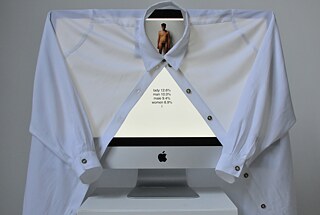

One concrete example of AI bias is Max Dovey’s 2015 “computer vision” experiment, [1] in which a computer classified a man in a suit as a “successful businessman” and a naked man as a “woman”. These weren’t just innocuous errors: they go to show how AI learns from stock images of clothed and unclothed human bodies. The bias that automatically associates nudity with femininity is, as a result, encoded in the technology.

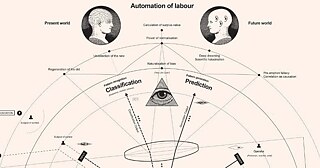

Nooscope Manifesto

How AI is altering relationships

Recent studies show that many people around the world use generative AI for “advice” and “emotional support” rather than for traditional tasks like searching or translating. [3] AI chatbots are always polite and never contradict or interrupt the user, thereby steering clear of the conflicts and emotional friction that are integral to human relationships.

Jeon pointed out that this phenomenon goes beyond mere comfort: it alters the very structure of relationships. Smooth interactions are pleasant, but they deprive us of the opportunity to learn from differences of opinion. The more optimized AI becomes in the art of “friendly” conversation, the more we come to prefer complacency to candor and truth. Jeon warned that we now run the risk of losing our appreciation for the value of authenticity.

The power of conscious consumption

Jeon also sought to raise awareness of our role as consumers of AI. We tend to be remarkably unstinting when it comes to spending time and money on our phones, subscriptions and AI tools. If it were a matter of other costly or time-consuming products or services, we’d likely express concerns about our consumption habits, or simply stop using them. But when it comes to tech, we generally give it a pass.

And yet we consumers actually do have a choice: we can reduce our consumption, choose alternative services, or air criticism. Jeon refers to these practices as the approach of an “intervening user”. In an age in which using AI has become the norm, we need to be mindful of which tasks we’re delegating to machines – and which ones we ought to keep control over ourselves.

Questions for the age of AI

How do we want to live in a present age that’s already largely shaped by AI? What do we sacrifice in using AI to save time and to work more efficiently? At the end of the day, what will be left of human judgment and the ability to think for oneself, of authenticity and personal relationships?

Now that AI has become part and parcel of everyday infrastructure in so many different domains all over the world, these questions concern Korean society as well as every other society that uses AI. Even if they lag behind the rapid pace of technological progress, that’s all the more reason to keep asking these questions – before it’s too late.

Author: Soyoung Choi

Korean Proofreading: Young-Rong Choo

Edit & Concept: Leslie Klatte

English Translation: Eric Rosencrantz

German Translation: Star Korea AG

Youjin Jeon